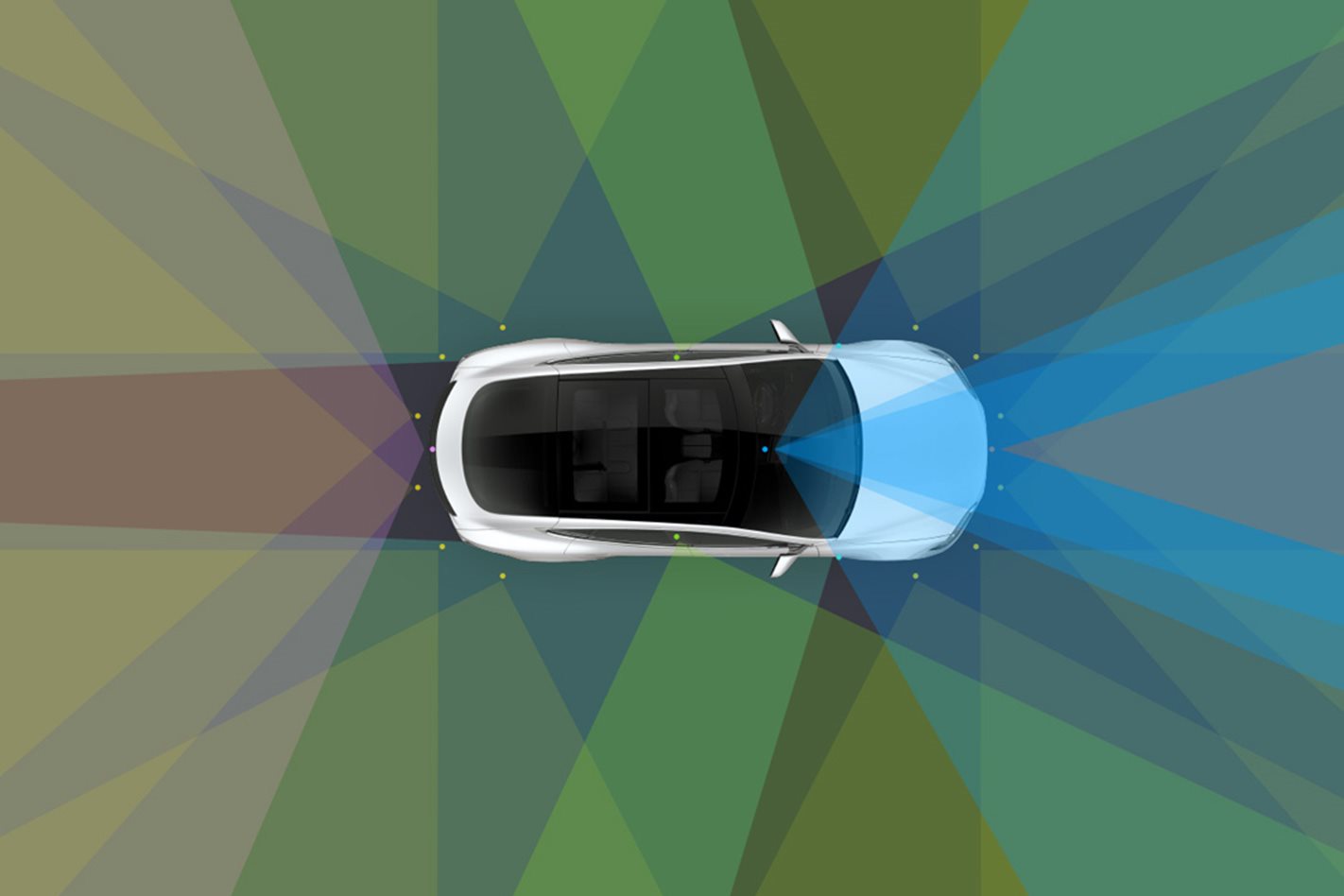

WHAT does a Tesla “see” while it is driving down the road? The electric carmaker has captured a video detailing what its Autopilot driver-assist technology sees in different conditions – without any driver input.

What makes this video interesting are the three side panels showing, in real time, what each of the cameras that help control the Tesla Model S sees, and how the car responds.

The cameras help the car read and react to everything from poorly defined unsealed road edges to stop signs, allowing it to safely negotiate blind intersections and stop for side traffic and pedestrians.

While we shouldn’t underestimate the human mind’s ability to process visual information and react accordingly, it would be impossible for any driver to see and process everything happening around the entire car at once.

It’s worth noting, though, that while this is at the cutting edge of autonomous technology even for Tesla, there is still a way to go before such systems process the information with the equivalent of human logic.

For example, the car stops for a couple of joggers on the side of the road instead of passing them safely, and it pauses in the middle of intersections a few times.

But it does demonstrate how far autonomous driving technology has come. It also shows that while the software still needs refining before Level 5 autonomy becomes a reality, the hardware fitted to the car is already up to the task.

The video is best viewed in full screen. You might want to pause it from time to time to keep up with what the cameras are seeing and how the car reacts to different situations.