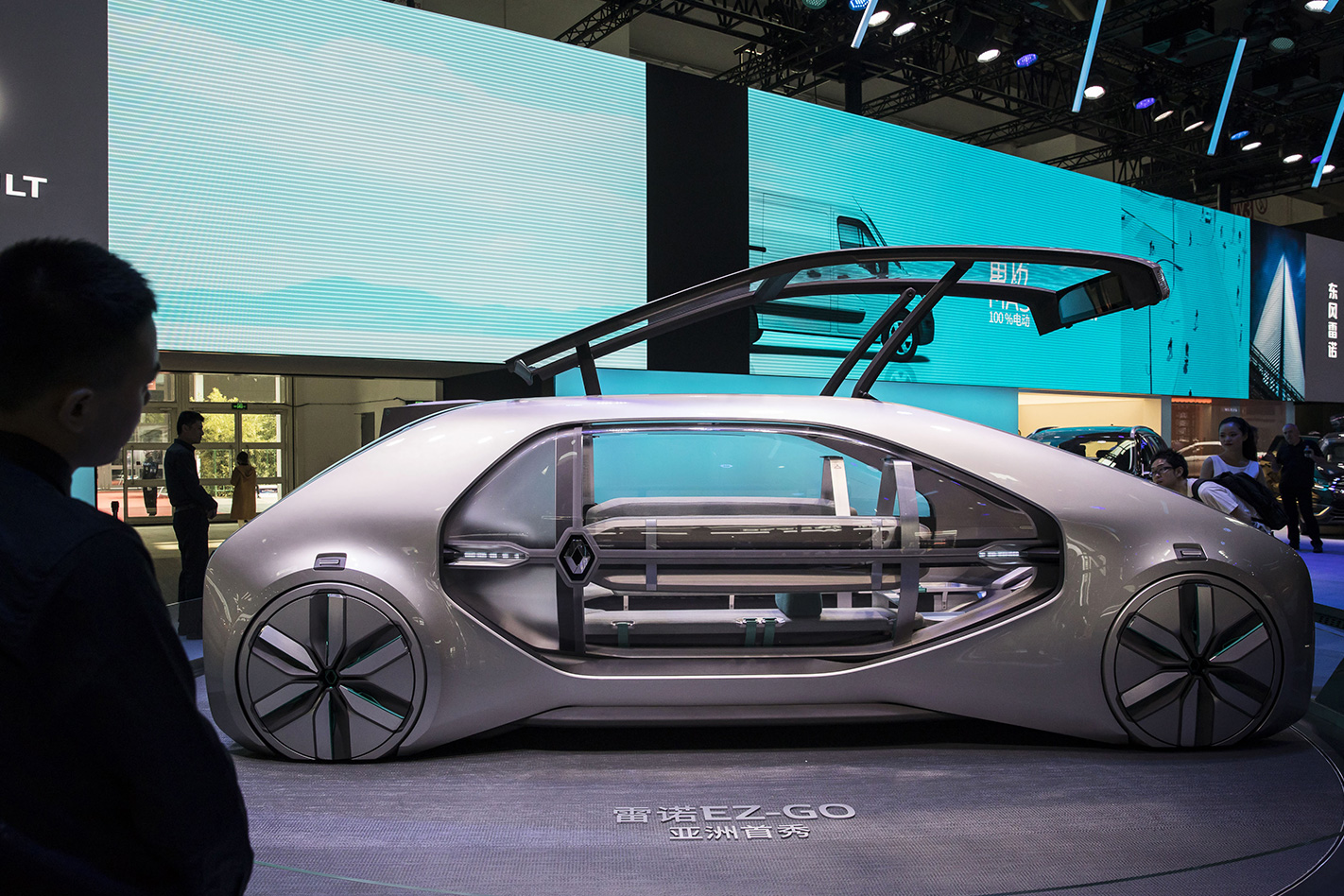

YOU are sitting in a self-driving car travelling down a road when a pregnant woman suddenly steps out into your path.

While the autonomous system is working, the brakes have failed. A crash is now unavoidable.

Should the vehicle hit the woman, or swerve into a barrier, putting the occupants at risk?

That dilemma was at the core of a study called the Moral Machine, conducted by researchers from the Massachusetts Institute of Technology (MIT).

As part of the research, members of the public were asked to take part in a survey in which they chose the outcome of an unavoidable accident. Should an autonomous vehicle prioritise saving a woman over a man? What about a rich person over a poor person? A criminal or a dog? A group of children or a group of doctors?

Putting a modern spin on the classic philosophical trolley problem, MIT’s research took in about 40 million decisions, made by millions of individuals, from 233 countries and territories. The results of the study have been published in the journal Nature, and offer an insight into what ethics the world wants self-driving cars to have.

Edmond Awad led the team of researchers, and stated there were some common themes amongst the millions of decisions.

“The strongest preferences are observed for sparing humans over animals, sparing more lives, and sparing young lives,” Awad and colleagues report.

“Accordingly, these three preferences may be considered essential building blocks for machine ethics, or at least essential topics to be considered by policymakers.”

According to Awad, the four most spared characters in the game were the baby, the little girl, the little boy, and the pregnant woman.

Other than those universal choices, Awad and his team determined choices were influenced by social, cultural and perhaps even economic factors, noting a split between “individualistic cultures and collectivistic cultures” – with North America and Europe falling into the former, and Asian cultures the latter.

According to the researchers, individualistic cultures “emphasise the distinctive value of each individual” and placed an emphasis on saving the highest number of characters, whereas collectivistic cultures “emphasise the respect that is due to older members of the community” and placed less of an emphasis on saving the young.

The same split also applies to wealthy characters, with North America and Europe preferring to save them over poorer characters.

One of the study’s more interesting results was that people would rather their autonomous vehicles hit a criminal than a dog. Cats didn’t fare too well either, sitting near the bottom of the priority list.

Awad and colleagues ended their report on an ominous note. While debate continues over exactly when a fully autonomous car will finally exist and take to our roads, its arrival increasingly seems inevitable, and the team’s research raises some difficult ethical questions about how these vehicles will be programmed.

“Never in the history of humanity have we allowed a machine to autonomously decide who should live and who should die, in a fraction of a second, without real-time supervision,” they conclude.

“We are going to cross that bridge any time now, and it will not happen in a distant theatre of military operations; it will happen in that most mundane aspect of our lives, everyday transportation. Before we allow our cars to make ethical decisions, we need to have a global conversation to express our preferences to the companies that will design moral algorithms, and to the policymakers that will regulate them.”