As we charge headlong towards a robotised future, car interiors are quickly becoming the arena where automakers set themselves apart.

The Consumer Electronics Show (CES) in Las Vegas has seen car manufacturers flood its halls in recent years, showing off innovations from the relatively realistic to the thoroughly far-fetched.

Below are five new automotive technologies shown at CES this year. Some could reach production at any moment, while at least one is a little further away.

CORNING GORILLA GLASS

Heard of Gorilla Glass? It’s the stuff smartphone manufacturers like to use for the faces of their handheld devices. It’s tough, it’s light, and it turns out it’s also suitable for use in cars.

At CES, Corning showed Gorilla Glass panels with digital displays built directly into them. The integrated display is transparent enough to see through when nothing is shown, and can overlay information within the glass. The glass can also darken on demand, acting as a dynamically tinted window.

Corning has already started supplying windscreens for the Ford GT. It claims the glass alone is one third lighter, twice as strong and three times better optically, which it says makes it well suited to head-up displays.

Gorilla Glass can also be formed into tightly curved shapes for touchscreens, instrument clusters and mirrors. Corning’s demo car had Gorilla Glass everywhere – the windscreen, windows, sunroof, dashboard, steering wheel and even the door panels.

BOSCH ACTIVE CAR PARK MANAGEMENT

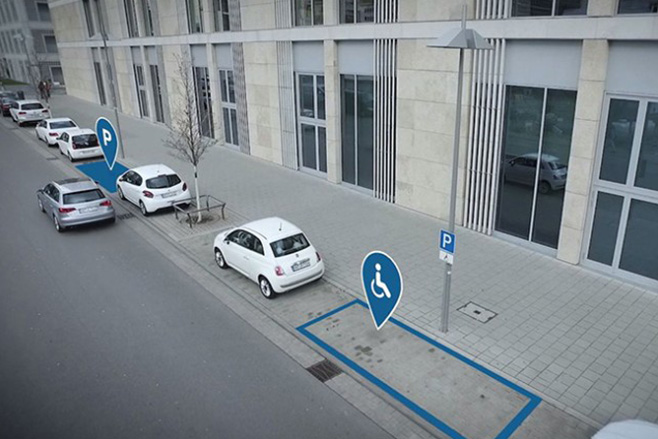

Finding a car park can be miserable. Bosch understands this and is making it possible for navigation systems to guide drivers directly to a park.

Cars will constantly scan the roadside in city areas using the same parking sensors already fitted to many cars. When the sensors detect a gap large enough to park in, its location is uploaded to the cloud, where navigation systems in other cars can retrieve it.

In practice, a driver would enter a destination into the GPS and be guided straight to the available car park closest to it. Three manufacturers, including Mercedes-Benz, are working with Bosch to implement this system in the US.

As more cars with basic autonomous functions take to the road, the system would also work in parking complexes such as those at shopping centres. In that scenario, a driver would pull up at a ‘drop zone’ near the car park entrance and get out, and the car would find itself a spot using information from the Bosch car park management system.

It’s different to other self-parking systems seen in cars with current driver assist functions, in that it doesn’t require as much hardware in the vehicle because the car park sensors do most of the work. The first car park fitted with the system will open in Germany in 2018, also in partnership with Mercedes-Benz.

MERCEDES-BENZ CLOUD MAPS

As cars collect more information about their surroundings in order to drive themselves, they will also use it to help other cars and drivers. Real-time maps, updated over the air with sensor data from cars, will give more detailed and accurate information about the roads we drive on than ever before.

Mercedes-Benz is developing high-resolution, three-dimensional scanning that can identify objects, even distinguishing a bicycle from a motorcycle. The brand plans to have its cars sharing that information in the near future.

Satellite navigation systems will draw on both an onboard map and real-time information from the cloud, so that autonomous functions keep working when there is no network connection. High-definition information about traffic, roadworks and other changes to the road will be relayed with centimetre accuracy.

This information will not only improve general GPS routing but also help enable fully autonomous driving. Using map data, it can tell the car when to adjust its speed for tighter-radius corners and turn-offs, well before its onboard sensors have scanned the road ahead.

NVIDIA ARTIFICIAL INTELLIGENCE

CES was dominated by two things: virtual reality and artificial intelligence. Nvidia is one of the brands charging ahead with both, and announced at the show that it is working with Mercedes-Benz to bring artificial intelligence to a mainstream production car within a year.

So what does AI in a car look like? In essence, the car will be able to help with tasks either when you simply ask it to by voice, or automatically, by making predictions based on your behaviour.

The system relies on machine learning, specifically deep learning, the same kind of technology behind assistants such as Siri, which interpret requests using context. In a car, this means the vehicle can learn what you do regularly and suggest actions it thinks you might want before you ask for them.

For example, before you even ask where the nearest petrol station is, the car will know you’re running low on fuel. It will then find the station selling the cheapest fuel at that moment, closest to your route, whether that is the route you normally drive or the one to a destination you have entered.

Mercedes and Nvidia want to give you 26 hours a day. While you sit in the car, AI-assisted in-car office functions will help you work through your emails and calendar, saving you time at the office. It’s an incremental approach, and its capabilities are only just beginning to unfold.

BMW HOLOACTIVE TOUCH

This is one we’ll probably be waiting some time to see in a BMW 3 Series, but BMW revealed a system called HoloActive Touch, and it’s remarkable that this virtual touchscreen with haptic feedback already exists and is not just science fiction.

A full-colour holographic image is reflected off an LCD screen buried inside the centre console and appears to ‘float’ in mid-air, within direct reach of the driver. The hologram displays buttons and other car controls, which the driver can operate by pressing with a finger or by using other intuitive gestures.

What’s most impressive, and most bizarre, is the array of ultrasonic speakers, which beam a jet of inaudible sound that ‘buzzes’ the user’s fingertip so that HoloActive Touch can acknowledge the button press. It’s a strange sensation, but it actually works.

BMW developed the system so it could move the dashboard and centre console further away from occupants and open up the interior to feel more like a living room. It’s all part of its i Inside Future concept.